LIGHTNING BLOGS

Welcome to the first in my lightning blog series. This series is for the ideas and observations we all make during our daily lives but rarely share or have time to explore.

THE RULE OF THREES, OR THE RULE OF THIRDS.

The rule of threes is an observation I made while trying to save energy and money by examining and swapping light bulbs and the mode of generating the emitted light.

Sounds great and very intellectual, but as usually happens, you do something and then upon reflection realise there appears to be a pattern. I was actually just trying to swap over to the newer 6 Watt LED bulbs I had recently found in my local supermarket, to save money, because my energy bills are creeping up and starting to hurt (mother of invention).

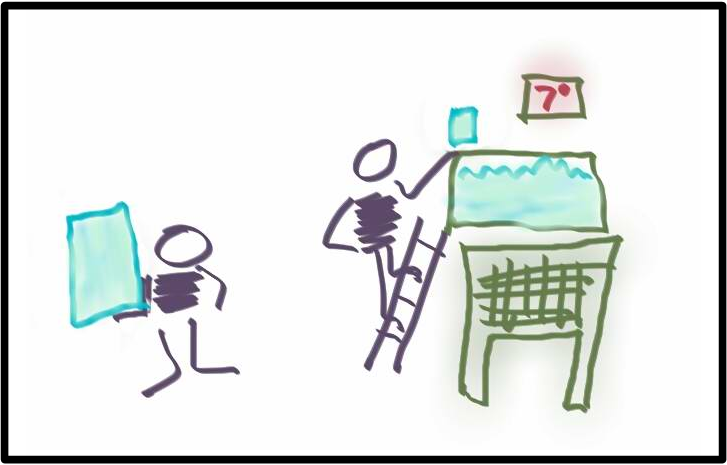

I had swapped all the lights in my house for these 6 Watt LEDs, except for the two main lights in my kitchen and dining area. These two hadn’t been swapped over because they were 18 Watt fluorescent tubes. Well, the day finally came and I decided it was time to do the swap. When I began to remove the fixtures, I noticed that they were not the original fixtures but had themselves replaced the type of light that was there previously. The original light fixtures were actually lamp holders, just like the type of fixture I was installing. Great, less touch-up work.

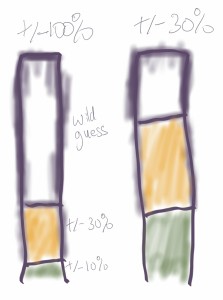

Then it struck me: when the house was built, the dominant form of lighting was the incandescent bulb. Incandescent bulbs usually ranged from 60 Watts to 100 Watts in a typical single light fixture for a room. Yes, they made smaller bulbs of 40 Watts and even as low as 20 Watts, but these were usually used in bedside lamps or chandeliers. Given the small room size, the original lamp holder would most likely have held a 60 Watt bulb.

So the situation was this: a bulb smaller than 60 Watts would have left the room poorly lit, but the more cost-effective fluorescent technology of the time meant a 20 Watt fluorescent tube would have given more light than the 60 Watt incandescent for a third of the energy cost. In fact, the fluorescent tube would probably have given out light equivalent to a 75 Watt bulb or more.

Now, the fluorescent tube I was replacing was in fact a newer, more efficient 18 Watt tube. Yet it struck me that I was now installing a 6 Watt bulb, one third the wattage of the one I was removing. I also realised that the fluorescent must have replaced a bulb of at least three times its own wattage.
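For anyone who likes to see the sums, here is a rough sketch of the arithmetic behind the pattern. The wattages are the ones from my story (the 60 Watt incandescent is my best guess at the original bulb), and the function name `wattage_ratio` is just something I made up for illustration.

```python
def wattage_ratio(old_watts, new_watts):
    """Return how many times more power the old lamp draws than the new one."""
    return old_watts / new_watts


# (description, old wattage, new wattage)
# The 60 W incandescent is an assumption about what the house was built with.
swaps = [
    ("incandescent -> fluorescent tube", 60, 20),
    ("fluorescent tube -> LED", 18, 6),
]

for label, old, new in swaps:
    print(f"{label}: {old} W -> {new} W, ratio {wattage_ratio(old, new):.1f}")
```

Both swaps come out at a ratio of 3, which is all the "rule" really is at this point: two data points from one kitchen.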

The rule of threes or thirds was born.

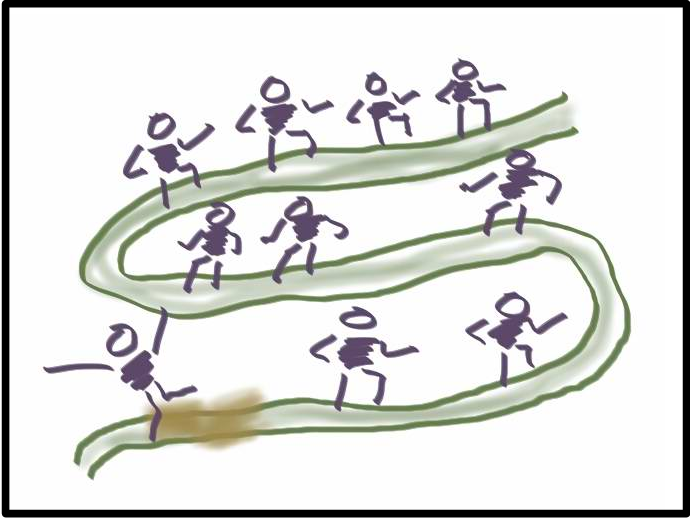

So does this mean that only when something becomes three times better, or more efficient, or uses a third of the energy or effort, do we actually muster enough motivation or pressure to change?

Can the rule of threes be seen in other areas or even help us to decide when retooling or changing the way we work is most beneficial?

Like I said, the Lightning Blogs are for observations and ideas only. Food for thought, and I hope the rule of threes or thirds may help in some way to guide you in your decision making.

Yes, I know wattage does not equal lumens, but for simplicity I didn’t go into that.

From Wikipedia: The luminous efficacy of a typical incandescent bulb is 16 lumens per watt, compared with 60 lm/W for a compact fluorescent bulb or 150 lm/W for some white LED lamps.
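If you do want a feel for the lumens, the sketch below multiplies each wattage from my story by the Wikipedia efficacy figures quoted above. Treat it as a ballpark only: my kitchen lights were fluorescent tubes rather than compact fluorescents, the 150 lm/W figure applies to "some" LED lamps so a supermarket bulb may well be lower, and the names `EFFICACY_LM_PER_W` and `estimated_lumens` are simply ones I picked for this example.

```python
# Luminous efficacy in lumens per watt, as quoted from Wikipedia above.
EFFICACY_LM_PER_W = {
    "incandescent": 16,
    "compact fluorescent": 60,
    "white LED": 150,  # "some" LED lamps; an optimistic figure for a cheap bulb
}


def estimated_lumens(watts, kind):
    """Rough light output: wattage multiplied by the efficacy for that lamp type."""
    return watts * EFFICACY_LM_PER_W[kind]


# Wattages from the story; the 60 W incandescent is an assumed original bulb.
lamps = [("incandescent", 60), ("compact fluorescent", 18), ("white LED", 6)]

for kind, watts in lamps:
    print(f"{watts} W {kind}: about {estimated_lumens(watts, kind)} lumens")
```

On these figures the three lamps land in roughly the same range (960, 1080 and 900 lumens), so each swap cut the wattage to about a third without a big change in the estimated light.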

PS. Careful how you pronounce "The Rule of Thirds"; it could be misunderstood as something else.